I've always been intrigued by these questions, and I've always enjoyed tinkering with and building robots. Creating a machine that can interact meaningfully with a human (through something other than a computer screen) would be a big milestone in human-machine interfaces. My goal in this project is to build an "Affective" robotic head (i.e. an expressive robot like Kismet) and examine the various behaviors that a machine can emulate in order to emotionally connect and interface with people. The final product should be a robot that can interact with its surroundings in an artificially intelligent manner and emulate various emotions, through facial expressions, in an appropriate context. In pursuing this project, I hope to learn a lot about psychology, anatomy, and engineering while designing and building the robot.

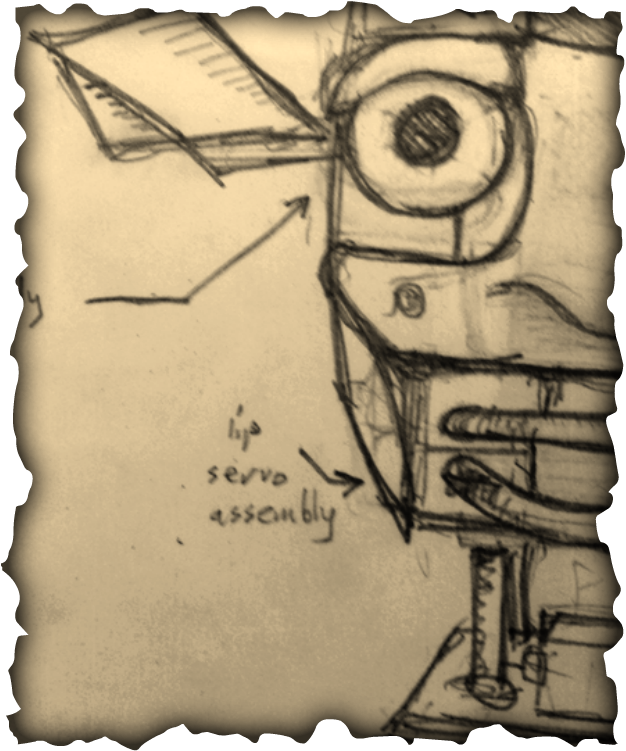

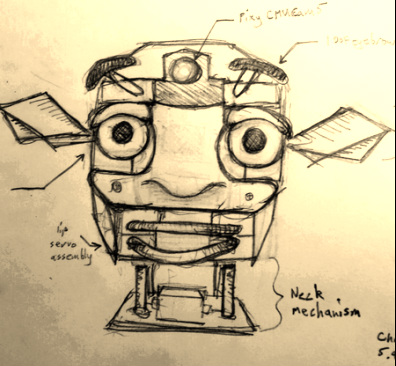

In planning the design for any robot, I first like to draw sketches of how I envision the final product. After several generations of scribbling in my sketchbook, the following concept has emerged (right). Of course, this is only a generalized image; the real robot will most likely require some modification and deviation from the original design concept. However, it's a pretty good starting point. The robot has movable lips, eyes, eyebrows, and ears. Its main sensory input comes from the Pixy CMUCam mounted in its forehead.
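As a rough illustration of how those four movable features might be driven in software, here is a minimal sketch that stores each expression as a set of target positions and converts them to servo angles. Everything in it is an assumption made for illustration: the `Pose` class, the `EXPRESSIONS` table, the normalized -1 to 1 scale, the 0 to 180 degree servo range, and the pose values themselves are hypothetical and are not taken from the actual robot.

```python
from dataclasses import dataclass

# Hypothetical actuator groups, named after the movable features of the head.
FEATURES = ("lips", "eyes", "eyebrows", "ears")


@dataclass(frozen=True)
class Pose:
    """Target position per feature, normalized to -1.0..1.0 (assumed convention)."""
    lips: float = 0.0
    eyes: float = 0.0
    eyebrows: float = 0.0
    ears: float = 0.0


# Illustrative values only; real poses would have to be tuned on the hardware.
EXPRESSIONS = {
    "neutral": Pose(),
    "happy": Pose(lips=0.8, eyebrows=0.3, ears=0.5),
    "sad": Pose(lips=-0.7, eyebrows=-0.5, ears=-0.6),
    "surprised": Pose(lips=0.2, eyes=1.0, eyebrows=1.0, ears=0.8),
}


def to_servo_angle(value, min_deg=0.0, max_deg=180.0):
    """Map a normalized position to a servo angle, clamping out-of-range input."""
    clamped = max(-1.0, min(1.0, value))
    return min_deg + (clamped + 1.0) / 2.0 * (max_deg - min_deg)


def servo_targets(expression):
    """Return a feature -> angle mapping; unknown expressions fall back to neutral."""
    pose = EXPRESSIONS.get(expression, EXPRESSIONS["neutral"])
    return {name: to_servo_angle(getattr(pose, name)) for name in FEATURES}


if __name__ == "__main__":
    for name in EXPRESSIONS:
        print(name, servo_targets(name))
```

Keeping the expressions in a plain table like this would make it easy to add or tune an emotion without touching the code that moves the servos.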

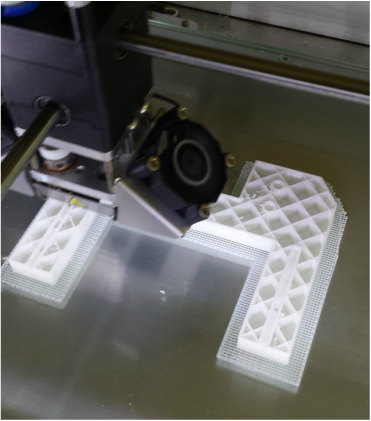

Shown left is the main support frame for most of the facial components, being printed. I plan to use a modular approach in designing this robot: the eye, mouth, and ear mechanisms will be built separately, then integrated into one coherent product supported by the main frame.

By the way, I will not be designing the eye mechanism myself. Instead, I will be using Thingiverse user dasaki's compact animatronic eye mechanism, which you can find at http://www.thingiverse.com/thing:266765.

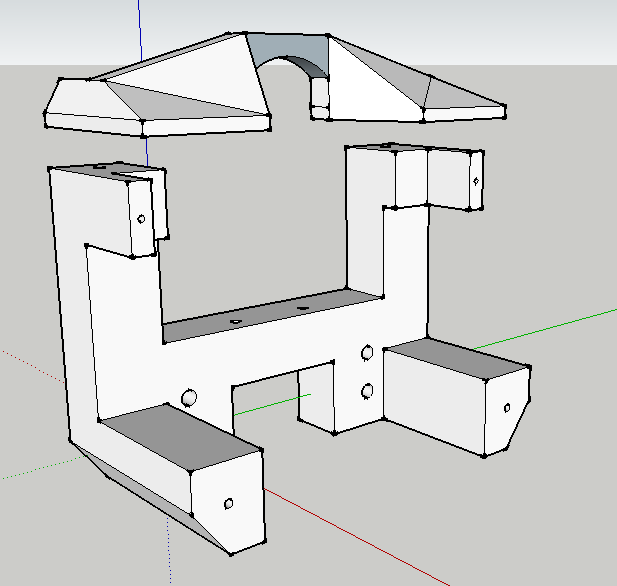

Here's a picture of the main chassis I modeled in SketchUp:

Dasaki Compact Animatronic Eyes: http://www.thingiverse.com/thing:266765

Dasaki thingiverse user profile: http://www.thingiverse.com/dasaki/designs
