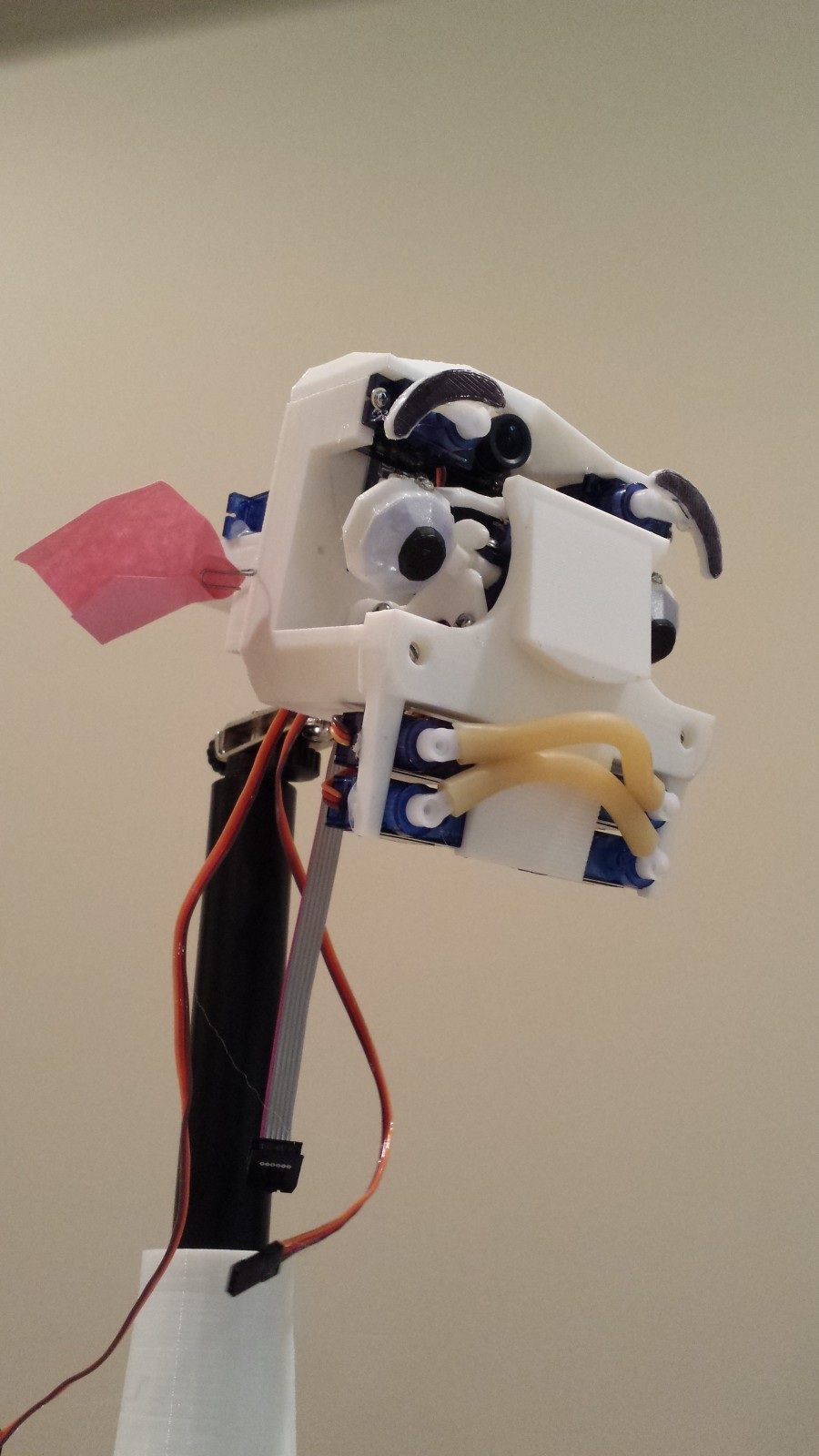

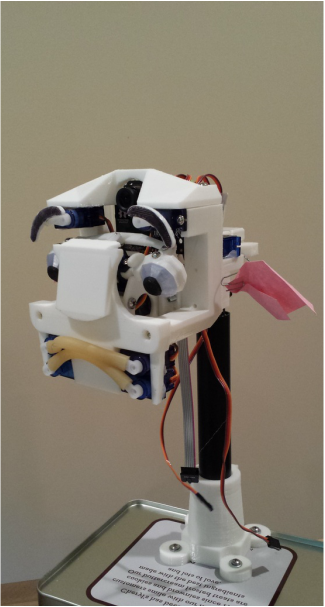

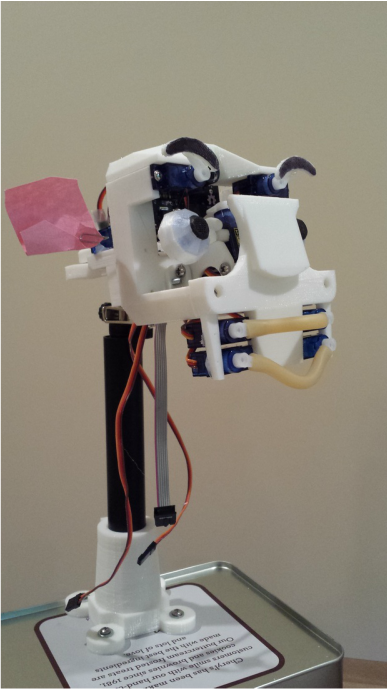

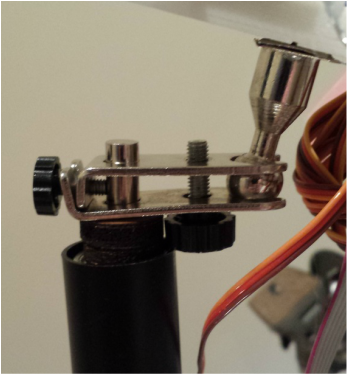

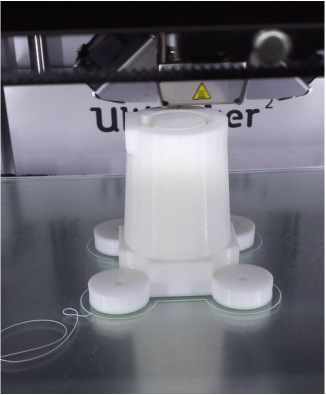

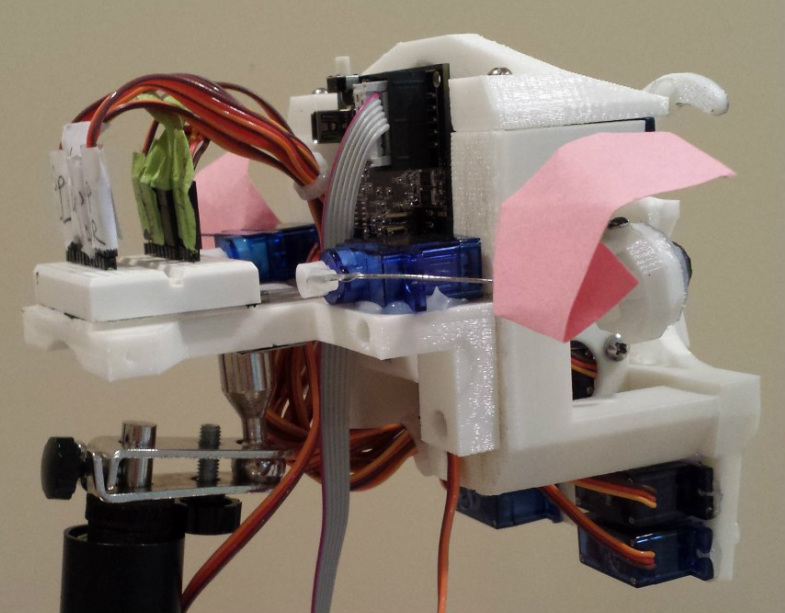

It's been a while since I last posted an update on my project, but I've made some significant progress since then.

Lip Assembly

Perhaps the most expressive feature of this robot is the lip assembly. I thought it would be interesting to stick strips of surgical tubing onto pairs of servos, each controlling a corner of a lip, and the result was surprisingly effective. I’d gotten the idea for this design by observing the mechanisms used in MIT’s active-vision robot head, MERTZ. The effect is convincing enough to elicit a positive response from observers, but not so realistic that it becomes uncanny.

Neck Assembly

Though not yet finished, the neck assembly is coming along much better than I would have predicted, mostly because my parents generously donated a broken standing lamp for me to scavenge. The lamp's neck (?) came apart in hollow segments, which just happened to fit perfectly with a few spare linear bearings that I had left over from my Printrbot RepRap. The ball-socket joint that joins the head to the neck was scavenged from an unused soldering station helping-hand (Fig. 1). The base mounting for the neck was designed and 3D printed, and fits quite snugly with the rest of the mechanism (Fig. 2). A 4-40 screw at the back fixes the lamp tube in place (not shown).

Ear Assembly

Finally, the ears were cut out of a piece of pink construction paper, glued to paperclips, and fastened to a servo horn behind the head.


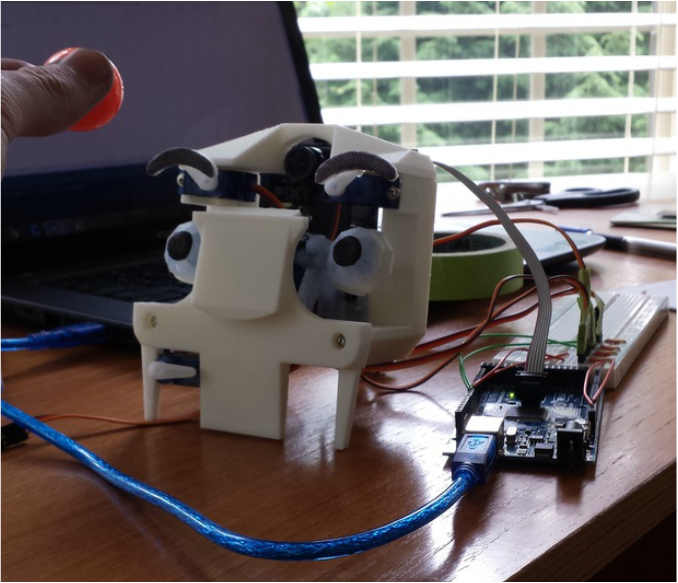

I finally got around to doing some programming! In this update, I wrote a quick Arduino sketch that reads the x and y coordinates of an orange ball from the Pixy and translates them into servo position values.

You may notice that the eye tracking isn't perfect. This is because I simply used a linear scale factor to convert the ball position to servo degrees, when in fact the rotation of the x and y servos is not linearly related to the x and y coordinates that the Pixy reports. For it to be really accurate, I'd have to code a routine that calculates the appropriate servo angle from the Pixy's x and y coordinates and a "magic constant" that has to be measured empirically.

It's a right-triangle problem: each Pixy value effectively represents the length of the side opposite the angle in a right triangle, and the angle theta (to send to the servos) can be calculated as arctan(m/k). Here m is measured directly from the Pixy value, but k must be determined empirically. I plan to find k by measuring the value of m when an object the Pixy is tracking is exactly 45 degrees from center. Since tan(45°) = 1, I can set m/k = 1 and solve for k (k = m when theta = 45°). Once I have k, I can simply plug it in for all my other calculations, and hopefully the eye tracking will be far more accurate.

Check out the video below for a demo of what the robot does with the current, simple algorithm:

If you have any helpful suggestions, comments, or corrections (of my math!) please leave a comment!

Thanks,
Chris

Arduino Source Code:

***UPDATE*** After a bit more experimentation, I realized that even though the more advanced algorithm described above could fix errors in the horizontal tracking direction, it can't fix errors in the vertical direction. This is because in the vertical direction, the Pixy is physically above the eye mechanism, and would require distance sensors to calculate the correct angle for the eyes. Since Pixy only senses colors, this doesn't seem to be a possibility. However, with a little tweaking of the y scale factor, I was able to achieve sufficient tracking results using the simple algorithm.
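One way to see why the vertical direction needs distance information is to write out the geometry. This is only a sketch under assumptions that are not in the post: the camera and the eyes both look straight ahead, the ball is at horizontal distance d from both, y is the ball's height above the camera's optical axis, h is how far the camera sits above the eye pivot, and φ is the vertical angle the camera sees.

```latex
\tan\varphi = \frac{y}{d},
\qquad
\tan\theta_{\text{eye}} = \frac{y + h}{d} = \tan\varphi + \frac{h}{d}
```

The extra term h/d cannot be recovered from the camera angle alone, since it depends on how far away the ball is. It shrinks as the ball moves farther away, and at a roughly constant working distance it behaves like a fixed correction, which may be why a tuned y scale factor tracks well enough in practice.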

Sometimes the simple solution is the best solution.
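For comparison, here is a rough sketch of the two mappings discussed in this post: the simple linear scale factor and the arctan(m/k) correction. It is not the sketch running on the robot, just plain C++ that should also compile inside an Arduino sketch. Everything named here is an assumption for illustration: the function names, the frame center of (160, 100), the 90 degree servo center, and the sign convention that a larger pixel value means a larger servo angle. The calibration helper generalizes the 45 degree idea to k = m / tan(angle), which may help if a 45 degree target falls outside the camera's field of view.

```cpp
#include <cassert>
#include <cmath>

// Assumed values, not taken from the original sketch.
const double kPi = 3.14159265358979323846;
const int FRAME_CENTER_X = 160;   // assumed center of the Pixy frame (x)
const int FRAME_CENTER_Y = 100;   // assumed center of the Pixy frame (y)
const int SERVO_CENTER_DEG = 90;  // assumed "looking straight ahead" angle

// Round to the nearest degree and keep the result in a hobby servo's 0..180 range.
int clampServo(double deg) {
    if (deg < 0.0) return 0;
    if (deg > 180.0) return 180;
    return static_cast<int>(std::lround(deg));
}

// Simple algorithm: servo angle changes linearly with the pixel offset.
// degPerPixel is the hand-tuned scale factor (one for x, one for y).
int linearAngle(int pixel, int center, double degPerPixel) {
    return clampServo(SERVO_CENTER_DEG + degPerPixel * (pixel - center));
}

// Calibration: measure the pixel offset m with the target at a known angle,
// then k = m / tan(angle). At 45 degrees this reduces to k = m.
double calibrateK(double mAtKnownAngle, double knownAngleDeg) {
    double t = std::tan(knownAngleDeg * kPi / 180.0);
    assert(t != 0.0);  // a 0 degree target carries no calibration information
    return mAtKnownAngle / t;
}

// Arctan algorithm: theta = arctan(m / k), where m is the pixel offset.
int arctanAngle(int pixel, int center, double k) {
    assert(k > 0.0);
    double m = pixel - center;
    double thetaDeg = std::atan(m / k) * 180.0 / kPi;
    return clampServo(SERVO_CENTER_DEG + thetaDeg);
}
```

In a control loop, the x servo could use `arctanAngle(x, FRAME_CENTER_X, k)` while the y servo stays on `linearAngle(y, FRAME_CENTER_Y, yScale)` with a tuned `yScale`, matching the conclusion in the update above.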

Author

Hi, I'm Chris! I like to tinker and build awesome things, and I'm fascinated by anything innovative and unique.

Archives

August 2015
